Evaluate annotations

You can compare your project to any other project. For example, you can use this function to evaluate automatically produced annotations (e.g. your project) against manual annotations.

Using Browser GUI

- Go to your project page.

- top > projects > your_project

- If you are logged in, you will find the Evaluations button in the Annotations pane. Click the button to open the Evaluations page of your project.

- In the Evaluations page, click the create button to create a new evaluation.

- In the New evaluation form,

- Choose the reference project against which you want to compare your project.

- Choose an evaluator (currently, only one, PubAnnotationGeneric, is available).

- Click the ‘Create evaluation’ button to complete creating an evaluation.

- Open the evaluation page by clicking the corresponding show button.

- You will find that the evaluation result is not yet available.

- Click the ‘Generate’ button to generate the evaluation result.

- Wait for a few minutes, and reload the page to find the result.

Comparison Metric

Currently, only one evaluator, PubAnnotationGeneric, is available. Please refer to the description on the GitHub page of its source code.
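To give a rough idea of what comparing two projects involves, the sketch below counts matching denotations between a study project and a reference project. It is not the PubAnnotationGeneric implementation: it assumes the simplest possible rule (a denotation matches only if its span and type are identical), and the function names `denotation_keys` and `compare` as well as the sample data are hypothetical. Denotations are assumed to follow the PubAnnotation JSON layout, with a `span` (`begin`, `end`) and an `obj`.

```python
from typing import Dict, List, Set, Tuple

# A denotation key: (begin offset, end offset, type label).
Key = Tuple[int, int, str]


def denotation_keys(denotations: List[dict]) -> Set[Key]:
    """Reduce each denotation to a comparable (begin, end, obj) tuple."""
    return {(d["span"]["begin"], d["span"]["end"], d["obj"]) for d in denotations}


def compare(study: List[dict], reference: List[dict]) -> Dict[str, Set[Key]]:
    """Exact-match comparison (an assumption, not the evaluator's actual rule)."""
    study_keys = denotation_keys(study)
    reference_keys = denotation_keys(reference)
    return {
        "true_positives": study_keys & reference_keys,   # found in both
        "false_positives": study_keys - reference_keys,  # only in the study project
        "false_negatives": reference_keys - study_keys,  # only in the reference project
    }


# Hypothetical sample data for one document.
study = [
    {"id": "T1", "span": {"begin": 0, "end": 5}, "obj": "Protein"},
    {"id": "T2", "span": {"begin": 10, "end": 15}, "obj": "Gene"},
]
reference = [
    {"id": "R1", "span": {"begin": 0, "end": 5}, "obj": "Protein"},
    {"id": "R2", "span": {"begin": 20, "end": 25}, "obj": "Protein"},
]

result = compare(study, reference)
for name, keys in result.items():
    print(name, sorted(keys))
```

The actual evaluator supports configurable matching, so its counts may differ from this exact-match sketch.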

Example

bionlp-st-ge-2016-reference-eval is a project created to show the evaluation function of PubAnnotation.

Now, the homepage of a project shows the menu item, Evaluations. Clicking it opens a page with a list of evaluations.

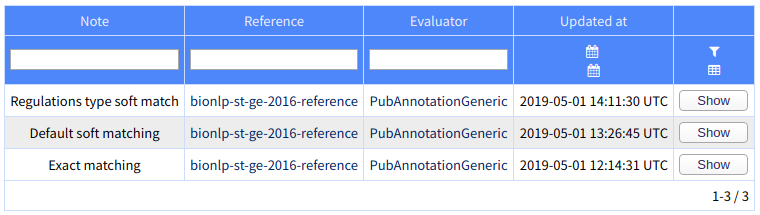

In the project, bionlp-st-ge-2016-reference-eval, three evaluations have been created as examples.

All three evaluations use the same evaluation tool, PubAnnotationGeneric, but with different settings.

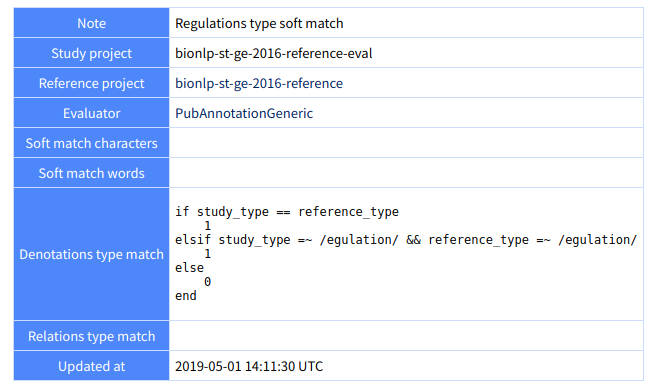

The property settings of the first evaluation are shown below. It is configured to use a custom matching algorithm when comparing the types of denotations.

For details of the settings, please refer to the GitHub page.

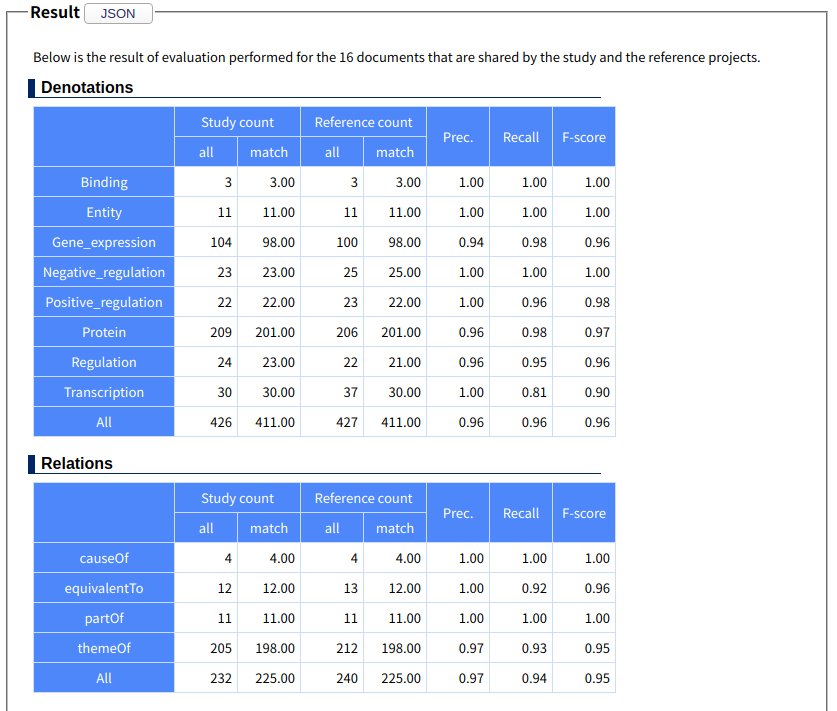

The evaluation result is shown as precision, recall, and F-score, as below:

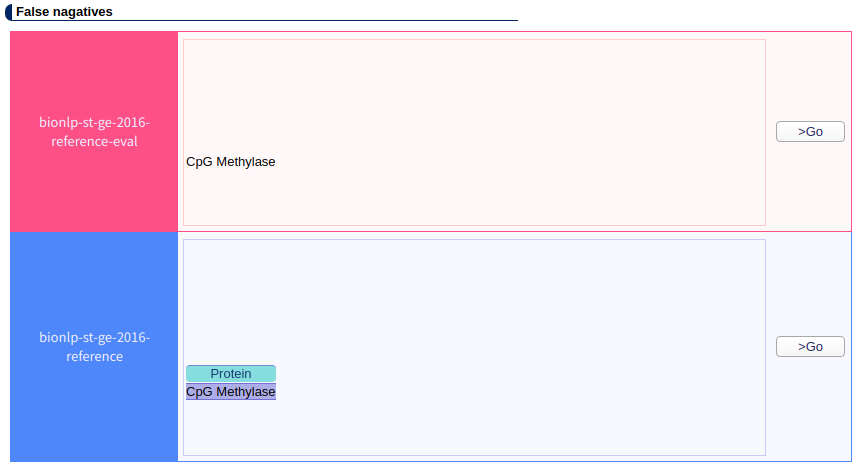
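For readers unfamiliar with these measures, here is a minimal sketch of how precision, recall, and F-score are conventionally derived from counts of true positives, false positives, and false negatives. It uses the standard definitions with the balanced (F1) score; the function name `precision_recall_fscore` and the sample counts are hypothetical, and the evaluator's own computation may differ in detail.

```python
from typing import Tuple


def precision_recall_fscore(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Standard definitions; returns 0.0 where a denominator would be zero."""
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    # F-score as the harmonic mean of precision and recall (F1).
    if precision + recall > 0:
        fscore = 2 * precision * recall / (precision + recall)
    else:
        fscore = 0.0
    return precision, recall, fscore


# Hypothetical counts: 8 matches, 2 spurious annotations, 8 missed annotations.
precision, recall, fscore = precision_recall_fscore(tp=8, fp=2, fn=8)
print(f"precision={precision:.3f} recall={recall:.3f} f-score={fscore:.3f}")
```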

The false positives and false negatives can also be accessed, as below: